so i wanted to do an update to my game ZeroVector for the 2018 Christmas holiday (and the one year anniversary of its original release) buuuut i got started on it way late and bit off too much work, so it wasn’t until May 1st that i actually got it out. i think it came out pretty cool though! 🙂

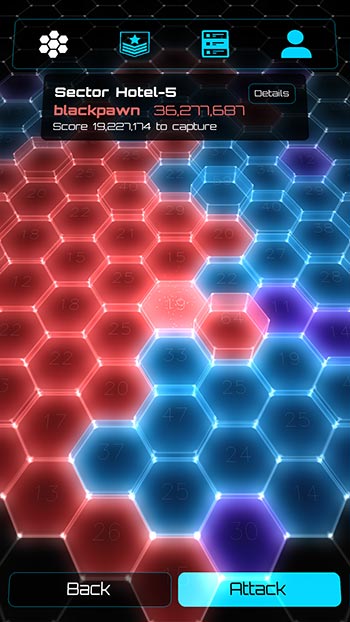

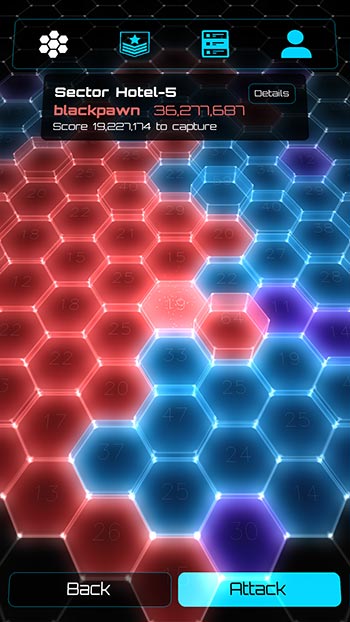

a major part of the update is a new multiplayer “Conquest” mode. in it, everyone is randomly assigned to the blue, red, or purple faction and then competes to capture sectors on a hexagonal map. this gives an extra fun dimension to the game besides just a single score attack leaderboard. the stages in each sector are also different, and sectors can have “mods” applied to them so there’s extra variety to play.
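for anyone curious how a hex map like this can be represented, here's a minimal python sketch using axial (q, r) coordinates. this is not the actual ZeroVector code: the names (`build_map`, `hex_neighbors`, `assign_faction`) and the map radius are all made up for illustration, and the real game may store its sectors completely differently.

```python
import random

# the three factions named in the post
FACTIONS = ["blue", "red", "purple"]

# the six neighbor offsets of a hex in axial (q, r) coordinates
HEX_DIRECTIONS = [(1, 0), (1, -1), (0, -1), (-1, 0), (-1, 1), (0, 1)]


def hex_neighbors(q, r):
    """return the six sectors adjacent to sector (q, r)."""
    return [(q + dq, r + dr) for dq, dr in HEX_DIRECTIONS]


def hex_distance(a, b):
    """number of hex steps between two axial coordinates."""
    dq = a[0] - b[0]
    dr = a[1] - b[1]
    return (abs(dq) + abs(dr) + abs(dq + dr)) // 2


def build_map(radius):
    """hypothetical map: every sector within `radius` steps of the center.

    each sector maps to its owning faction, or None if uncaptured.
    """
    sectors = {}
    for q in range(-radius, radius + 1):
        for r in range(-radius, radius + 1):
            if hex_distance((q, r), (0, 0)) <= radius:
                sectors[(q, r)] = None
    return sectors


def assign_faction(rng=random):
    """put a new player on a random faction."""
    return rng.choice(FACTIONS)


sectors = build_map(2)
sectors[(0, 0)] = assign_faction()  # the center sector gets captured
```

axial coordinates keep the neighbor lookup down to a fixed table of six offsets, which is handy for things like checking which sectors border a faction's territory.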

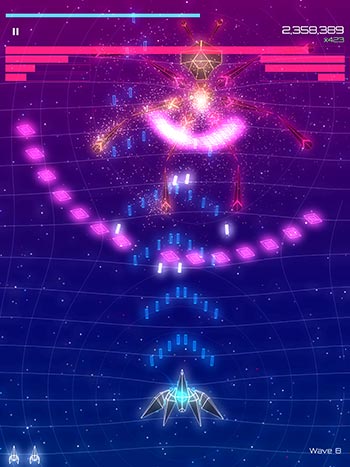

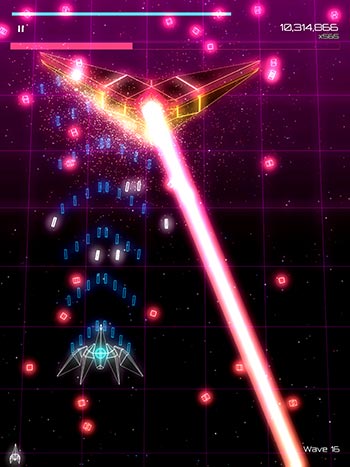

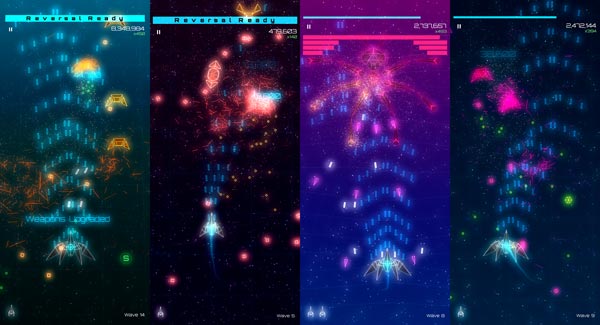

with the update, there’s lots more game content added. along with new enemy ships and firing patterns there are also two new bosses to fight! big thanks to Kirill for helping me out with 3d meshes for the spider and stealth bomber and some other cool enemies like the stingrays.

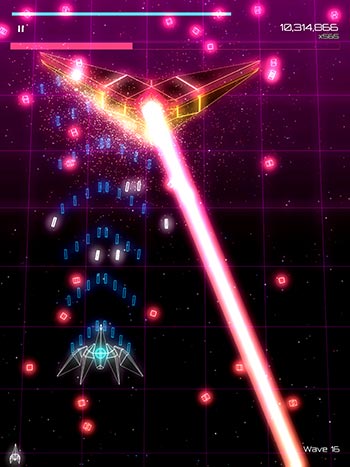

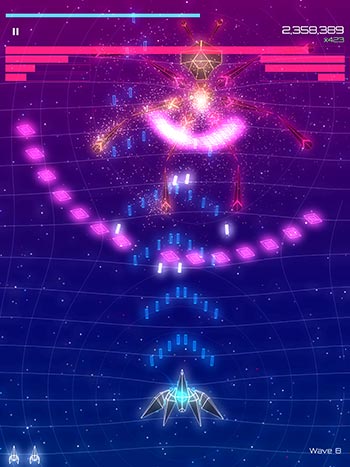

the game uses a custom 3d engine and in 0.1 i had limited myself to drawing only lines. all the ships, bullets, effects, text, and UI just used single pixel width lines. this helped me keep things simple and focus on building out the gameplay instead of sinking all my time into fun graphics stuff. now with the 0.2 update i still went for the same retro vector display style but eased up on that lines-only restriction. this meant fleshing out all the engine systems for fragment shaders, textures, font rendering, and such. i also implemented a simple animation system so that some enemies and bosses can have moving parts.

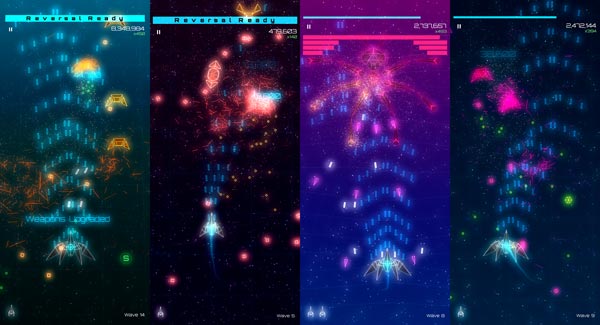

something i struggled with was making each stage feel different. i tried and failed a lot with different color schemes for the enemies, bullets, and backgrounds. i had to keep changing the rendering to make it possible for me to push the styles apart. hopefully the stages ended up distinct enough. i also tried to give each stage some different enemy types and unique firing patterns. for the backgrounds i built a new procedural system which can interact with the player, enemies, and triggers. the code came out quite cool but it still falls short in the actual game. in future updates i want to make better use of it, and i have a million ideas still to explore with it.

another thing i needed to do for 0.2 was build out a proper UI system. in 0.1 i had gotten away with a crazy simple hard coded UI since i only needed it for the main menu and score screen. with the update there’s loads more UI, especially with the Conquest mode. it’s still quite a simple system though, with a hierarchy of controls in linear horizontal, vertical, and stack layouts. i render the text using pre-computed signed distance fields from the font glyphs, and that works really nicely for having text at many sizes easily.
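to give a feel for what a control hierarchy with those three layouts can look like, here's a small python sketch. it's an illustration only, not the game's UI code: the `Control` class, the `measure` and `arrange` functions, and the two-pass approach are all assumptions on my part. horizontal lays children out left to right, vertical top to bottom, and stack puts them all at the same spot on top of each other.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Control:
    """hypothetical UI control: a leaf with a fixed size, or a container."""
    width: float = 0.0
    height: float = 0.0
    layout: str = "stack"  # "horizontal", "vertical", or "stack"
    children: List["Control"] = field(default_factory=list)
    x: float = 0.0
    y: float = 0.0


def measure(c):
    """bottom-up pass: a container sizes itself to fit its children."""
    if not c.children:
        return c.width, c.height
    sizes = [measure(child) for child in c.children]
    widths = [w for w, _ in sizes]
    heights = [h for _, h in sizes]
    if c.layout == "horizontal":
        c.width, c.height = sum(widths), max(heights)
    elif c.layout == "vertical":
        c.width, c.height = max(widths), sum(heights)
    else:  # stack: children overlap, so take the largest in each axis
        c.width, c.height = max(widths), max(heights)
    return c.width, c.height


def arrange(c, x=0.0, y=0.0):
    """top-down pass: position each control (call measure() first)."""
    c.x, c.y = x, y
    child_x, child_y = x, y
    for child in c.children:
        arrange(child, child_x, child_y)
        if c.layout == "horizontal":
            child_x += child.width
        elif c.layout == "vertical":
            child_y += child.height
        # stack: every child stays at the container's origin


# a vertical menu holding a title and a horizontal row of two buttons
row = Control(layout="horizontal", children=[Control(40, 20), Control(60, 20)])
menu = Control(layout="vertical", children=[Control(80, 30), row])
measure(menu)
arrange(menu)
```

after the two passes `menu` is 100 wide and 50 tall, the button row sits at y = 30 under the title, and the second button starts at x = 40.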

overall, i built out all the different game systems a ton in this update so it’s now a lot easier to create new content for it. i think it’ll be fun to make some new content (enemy, firing pattern, stage, etc.) each weekend and slowly build up a nice big set for the next major update, while also releasing some of it to new modes and sectors in Conquest along the way. it’s fun having a shmup i can noodle with over time. 😀

if you haven’t tried it out, you can download ZeroVector for free from the iOS App Store and from Google Play on Android. if you enjoy it, be sure to visit the in-game store and buy me a taco! it’s fun when people do that and i really do go out and celebrate with tacos from Taco Bell, Chipotle, and Pepe’s 🌮 😍 🌮