2018-08-18

#generative

#experiment

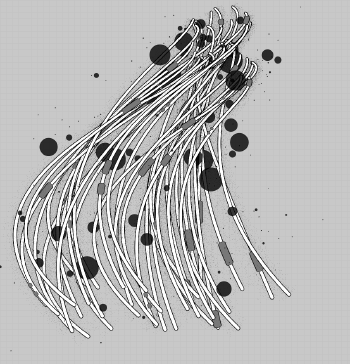

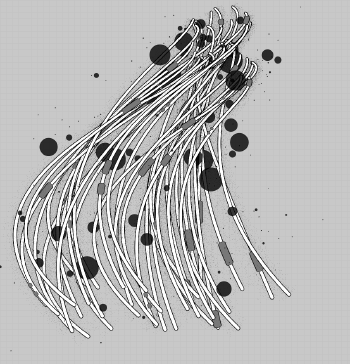

i made a little program that procedurally draws a bunch of boxes with strokes that mimic hand-drawn lines. i got a bit fancy with the occlusion and finding outlines and then producing an animation that builds up the lines in a way a human might.

the box arrangements are different every time it runs and come out looking like robot insects to me which i thought was kinda neat. 🐜 🤖 🙂
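here's a rough sketch of one way to get a hand-drawn looking stroke, not the actual code from the program. it assumes a stroke is just a straight segment whose sample points drift sideways by a small random walk that fades out at the endpoints. `wobbly_line`, `segments` and `wobble` are names made up for this example.

```python
import math
import random


def wobbly_line(p0, p1, segments=12, wobble=1.5, seed=None):
    """Return points along p0 -> p1, nudged sideways like a shaky pen."""
    rng = random.Random(seed)
    (x0, y0), (x1, y1) = p0, p1
    length = math.hypot(x1 - x0, y1 - y0)
    if length == 0:
        return [p0]
    # unit normal to the segment, used as the direction of the wobble
    nx, ny = -(y1 - y0) / length, (x1 - x0) / length
    points = []
    offset = 0.0
    for i in range(segments + 1):
        t = i / segments
        # random walk so neighbouring points drift together instead of jittering
        offset += rng.uniform(-wobble, wobble) * 0.5
        offset = max(-wobble, min(wobble, offset))
        # fade the wobble to (almost) zero at both ends so corners still meet
        fade = math.sin(math.pi * t)
        points.append((x0 + (x1 - x0) * t + nx * offset * fade,
                       y0 + (y1 - y0) * t + ny * offset * fade))
    return points
```

drawing the points in order, a few per frame, gives a build-up animation for free.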

2018-07-22

#generative

#experiment

playing with html canvas to make generative mop wizards 🙃

it’s very slowwww, back to native code for me.

2017-11-07

#experiment

#tool

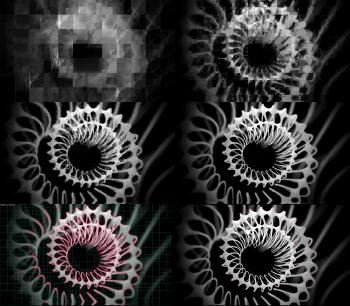

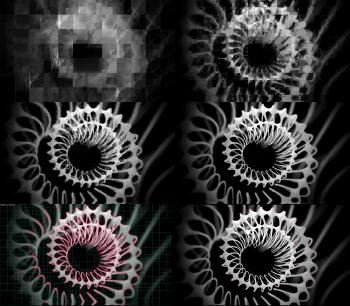

i made a little photoshop plugin for a reaction / diffusion simulation that’s guided by a source image. i thought the effect would be cool or something but it’s nothing to write home about. 😜

anyway, should anyone want it i posted it to: ReactDiffuse
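for the curious, here's a minimal sketch of an image-guided reaction / diffusion step. it is not the plugin's code: it assumes the gray-scott model and assumes the source image (a `guide` grid of brightness values in 0..1) steers the pattern by nudging the feed rate per pixel. the function names and all the constants are picked just for this example.

```python
def laplacian(grid, x, y):
    """4-neighbour laplacian with wrap-around edges."""
    h, w = len(grid), len(grid[0])
    return (grid[y][(x - 1) % w] + grid[y][(x + 1) % w]
            + grid[(y - 1) % h][x] + grid[(y + 1) % h][x]
            - 4.0 * grid[y][x])


def gray_scott_step(u, v, guide, du=0.16, dv=0.08, kill=0.062, dt=1.0):
    """Advance the two chemical grids u and v by one time step."""
    h, w = len(u), len(u[0])
    new_u = [[0.0] * w for _ in range(h)]
    new_v = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            # assumption: brighter guide pixels get a higher feed rate
            feed = 0.03 + 0.03 * guide[y][x]
            reaction = u[y][x] * v[y][x] * v[y][x]
            new_u[y][x] = u[y][x] + dt * (
                du * laplacian(u, x, y) - reaction + feed * (1.0 - u[y][x]))
            new_v[y][x] = v[y][x] + dt * (
                dv * laplacian(v, x, y) + reaction - (feed + kill) * v[y][x])
    return new_u, new_v
```

start with `u` all 1.0 and `v` all 0.0, drop a few blobs of `v` in, and run the step a few thousand times to see patterns form.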

2016-02-29

#experiment

there i was just minding my own business when from some forgotten corner of my mind INFINIMEX called out to me once again. so this past weekend instead of writing a novel or sorting my sock drawer like a normal human being would do, i set out to work on some upgrades for it.

i rewrote most of it making it more general so it can splat new content wherever i want and automatically determine what region is available for cutting through based on current contents, patch size, and how many laplacian pyramid blend levels are being used.

2016-01-10

#experiment

goodbye 2015. now in 2016 i’m looking forward to NOT moving. what a pain that is!! :P

one of my goals last year was to ship PaintBot… well i didn’t make it. turns out to be quite difficult wrapping up a fun little experiment into a finished product.

i went through a bunch of iterations on the user interface - bouncing a few times between being too simple to give enough control over the output and being too complicated to expect any casual user to bother exploring.

2015-10-18

#experiment

i’m hopefully closing in on a first release for a fun graphics toy i’ve been working on periodically that turns photos into cool digital artworks.

it’s called PaintBot and is a further evolution of my old strokerizer experiment (which itself grew out of glypherizer)

i made a website for it at paintbotapp.com so you can check out some early examples if you like and follow @paintbotapp on twitter for news as it approaches release!

2015-05-28

#experiment

playing around with encoding an image as a fractal and reconstructing it

2015-01-11

#experiment

i’ve always wanted to code up and play with some of the texture synthesis algorithms out there since reading the Graphcut paper from Siggraph 2003. i remember thinking it was the coolest thing ever and wondering why it wasn’t being used for everything everywhere all the time. back then i found a Gimp plugin that purported to implement it but the results i got with it were absolutely awful.

anyway it came up while chatting with Kirill a few months back and led to me experimenting a bit with image quilting between matches of Hearthstone one sunday.

2011-12-28

#experiment

needing a decoration for halloween i decided to port my old fire skull flash experiment to the iPad. since i’ve been doing a lot of iOS coding recently it was just a couple hours to get it up and running nicely. then i wanted to spice it up a bit!

the first thing was a fancy new skull mesh. in the old flash experiment the skull was software rendered in ActionScript (doing vertex projection to 2d in script then using polygon bitmap fill to rasterize) so the mesh had to be pretty simple and was only 1,321 triangles.
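the "vertex projection to 2d in script" part boils down to a perspective divide per vertex. a tiny sketch of that idea follows. it's in python rather than ActionScript and is not the original code; `project`, `project_triangle`, `focal_length` and the screen center parameters are made-up names for illustration.

```python
def project(vertex, focal_length, center_x, center_y):
    """Perspective-project a camera-space (x, y, z) point to screen (x, y).

    Returns None for points at or behind the camera plane.
    """
    x, y, z = vertex
    if z <= 0:
        return None
    scale = focal_length / z
    # screen y grows downward, so flip the sign of y
    return (center_x + x * scale, center_y - y * scale)


def project_triangle(triangle, focal_length, center_x, center_y):
    """Project all three vertices, or return None if any is behind the camera."""
    points = [project(v, focal_length, center_x, center_y) for v in triangle]
    if any(p is None for p in points):
        return None
    return points
```

each projected triangle would then be handed to whatever 2d polygon fill the platform provides.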

2010-01-03

#experiment

so 16 years ago i started keeping a journal, 14 years ago i started saving all my source code, 10 years ago i started saving regular screenshots of my projects and since then have been accelerating the rate at which i store off snapshots from originally around one every month to by the end of 2009 one image a day and one video a week. this is quite a lot of data but the rate of technological advance in storage has far exceeded the increasing rate of data i store.

2009-10-23

#experiment

so i gave video strokerization a try and it turned out pretty cool. here you can see the nine inch nails march of the pigs music video strokerized:

nine inch nails march of the pigs strokerized! from blackpawn on Vimeo.

for this i told strokerizer it could only paint 64 brush strokes each frame so it didn’t get much chance to fill in fine details with the camera always moving around like crazy.

2009-09-20

#experiment

so previously i blogged about my glypherizer experiment to approximate images with a small number of font glyphs. unfortunately it didn’t meet with the fantastic success i had hoped. :P the ideas were still a bit itchy though so i wound up working some more on it. this time around i used images instead of font glyphs so they could be brush strokes or other crazy stuff in addition to text.

2009-08-10

#experiment

i was trying to make some cool stuff out of text glyphs and kinda failed. i thought: wouldn’t it be neat if i could reproduce an image using a small number of glyphs from wingdings or even a regular font? i’d only need to store the quantized position, rotation, scale, the glyph itself and some color. so i wrote a nicely threaded program to churn through the possibilities and find me some nice glyph placements.
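to give a feel for how little data that is, here's a sketch that packs one glyph placement into a single integer. the bit widths are assumptions chosen for the example (8 bits each for x and y, 6 for rotation, 4 for scale, 8 for the glyph index, 12 for color), not what the real program used, and `pack`, `unpack` and `quantize_angle` are hypothetical helpers.

```python
# (field name, bit width) in packing order; the widths are illustrative guesses
FIELDS = (("x", 8), ("y", 8), ("rotation", 6),
          ("scale", 4), ("glyph", 8), ("color", 12))


def pack(placement):
    """Pack a dict of quantized fields into one integer (46 bits here)."""
    word = 0
    for name, bits in FIELDS:
        value = placement[name]
        if not 0 <= value < (1 << bits):
            raise ValueError(f"{name}={value} does not fit in {bits} bits")
        word = (word << bits) | value
    return word


def unpack(word):
    """Inverse of pack: peel the fields off from the low bits upward."""
    placement = {}
    for name, bits in reversed(FIELDS):
        placement[name] = word & ((1 << bits) - 1)
        word >>= bits
    return placement


def quantize_angle(degrees, bits=6):
    """Snap an angle to one of 2**bits evenly spaced steps."""
    steps = 1 << bits
    return round((degrees % 360.0) / 360.0 * steps) % steps
```

with these widths a placement is under 6 bytes, so even a few hundred glyphs stay tiny compared to the source image.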

2009-07-28

#demoscene

#experiment

my first iphone app has made its way to the app store! just search for “plasma effect” to find it in the store (or click here to open in itunes directly) and download it for free! the app is a modern implementation of the old school demoscene plasma effect. while running you can touch the screen to interact with the plasma and shake the iphone to switch color palettes and plasma shapes.
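if you haven't seen it before, the classic plasma is just a few sine waves summed per pixel and pushed through a color palette. here's a generic sketch of that idea in python. it is not the app's code, and the wave frequencies, the palette and the function names are all arbitrary choices for the example.

```python
import math


def plasma_value(x, y, t):
    """Sum three sine waves and rescale the result to the range 0..1."""
    v = math.sin(x * 0.1 + t)
    v += math.sin((x + y) * 0.07 + t * 0.5)
    v += math.sin(math.hypot(x - 32, y - 32) * 0.15 - t)
    return (v + 3.0) / 6.0


def palette(v):
    """Map 0..1 to an (r, g, b) triple using phase-shifted cosines."""
    return tuple(
        int(127.5 * (1.0 + math.cos(2.0 * math.pi * (v + shift))))
        for shift in (0.0, 1.0 / 3.0, 2.0 / 3.0)
    )


def render_frame(width, height, t):
    """Return one frame as rows of (r, g, b) tuples at time t."""
    return [[palette(plasma_value(x, y, t)) for x in range(width)]
            for y in range(height)]
```

animating is just calling `render_frame` with an increasing `t`, and swapping `palette` for another function changes the color scheme.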

2007-10-26

#experiment

cool! the world famous mr. doob has linked to my fire skull experiment in his latest post! :) i’ve been continuing to learn more about actionscript 3 and develop a small 3d engine. performance has continued to be a tricky thing. i’m so used to thinking at a lower level and in terms of the sorts of optimizations you’d do in c++ instead of as3. writing fast code in as3 is actually trickier in many ways than c++ because it often means you have to mangle your code and manually inline and unroll loops which most c++ compilers just do for you automatically.

2007-09-15

#experiment

as an excuse to try out the flash flex ui system i coded a small activator inhibitor texture generator. it uses cellular automata to simulate pigment cells switching between differentiated and undifferentiated states based on competing densities of the activator and inhibitor chemicals. you can play with it here and here are some sample tiling textures i made with it so far:

i made textures like this before with reaction diffusion algorithms but those were much slower.
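here's a rough sketch of that kind of activator / inhibitor cellular automaton. it follows the well known young-style rule (nearby differentiated cells activate, more distant ones inhibit) and is an assumption about the general approach rather than the flash code. the radii, weights and function names are picked for the example, and the grid wraps around so the result tiles.

```python
import random


def make_kernel(r_act, r_inh, w_act, w_inh):
    """Offsets with a positive weight inside r_act, negative out to r_inh."""
    kernel = []
    for dy in range(-r_inh, r_inh + 1):
        for dx in range(-r_inh, r_inh + 1):
            d2 = dx * dx + dy * dy
            if d2 <= r_act * r_act:
                kernel.append((dx, dy, w_act))
            elif d2 <= r_inh * r_inh:
                kernel.append((dx, dy, w_inh))
    return kernel


def step(grid, kernel):
    """One update: each cell sums the influence of differentiated neighbours."""
    h, w = len(grid), len(grid[0])
    out = [row[:] for row in grid]
    for y in range(h):
        for x in range(w):
            total = 0.0
            for dx, dy, weight in kernel:
                if grid[(y + dy) % h][(x + dx) % w]:  # wrap so textures tile
                    total += weight
            if total > 0:
                out[y][x] = 1   # activator wins: cell differentiates
            elif total < 0:
                out[y][x] = 0   # inhibitor wins: cell reverts
            # total == 0 leaves the cell unchanged
    return out


def generate(size=32, steps=5, seed=1):
    """Random start, then a handful of steps until spots or stripes settle."""
    rng = random.Random(seed)
    grid = [[1 if rng.random() < 0.5 else 0 for _ in range(size)]
            for _ in range(size)]
    kernel = make_kernel(2, 4, 1.0, -0.3)
    for _ in range(steps):
        grid = step(grid, kernel)
    return grid
```

it usually settles in a handful of steps, which fits with it being much quicker than iterating a full reaction diffusion simulation.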

2005-05-28

#demoscene

#experiment

i was very thirsty today so i coded up some water. it requires a video card that supports pixel shaders 2.0 mostly because i'm lazy. thirst will do that to you, you know?

2005-05-15

#demoscene

#experiment

whipped up a quick test of using tg to make textures in small demos/intros. it's 4kguts.

the tg routines create a diffuse and normal texture that's then applied to an animated plane.